Project information

- Course: COMP 4180

- Name: Mobile Robotics

Description

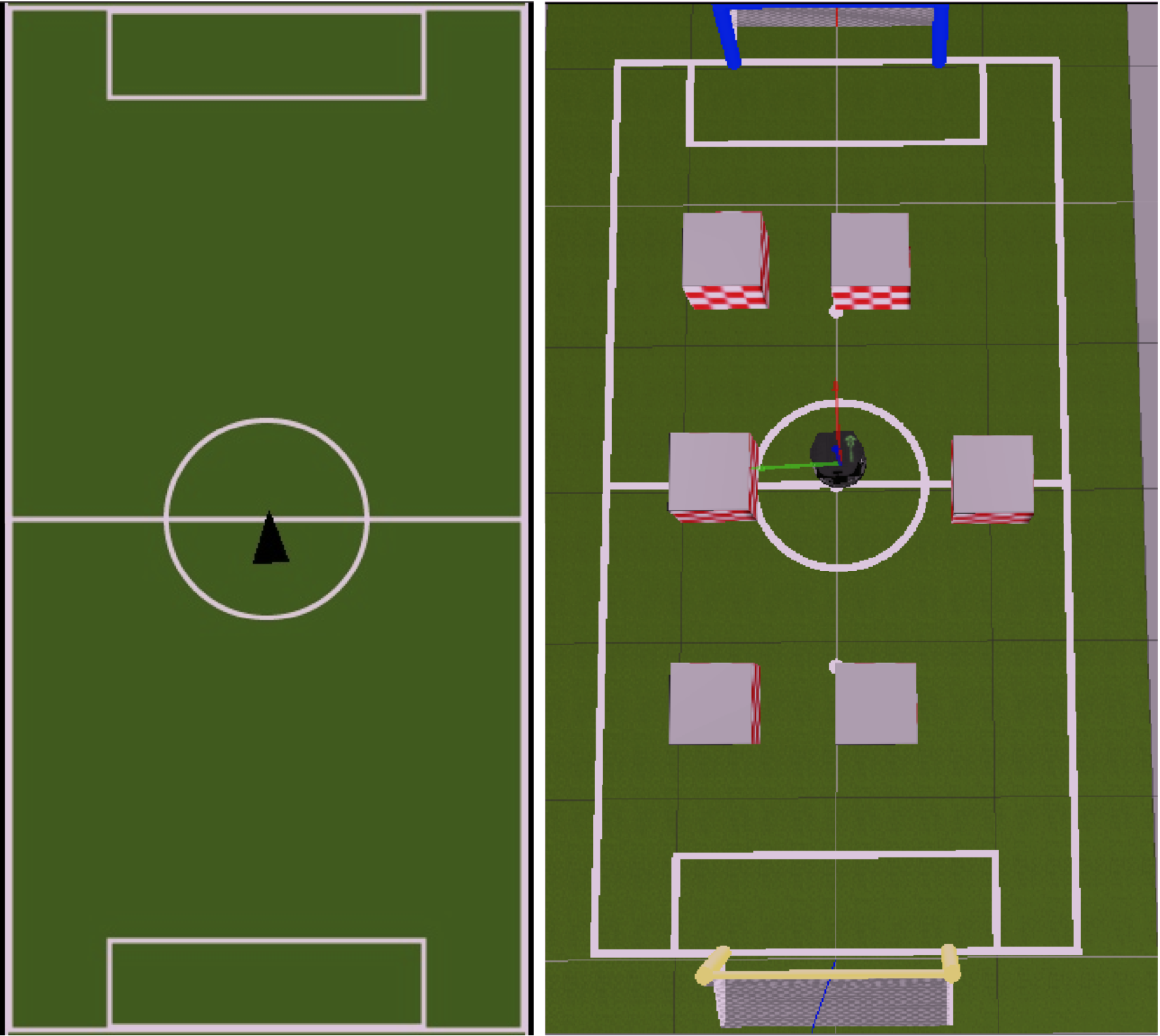

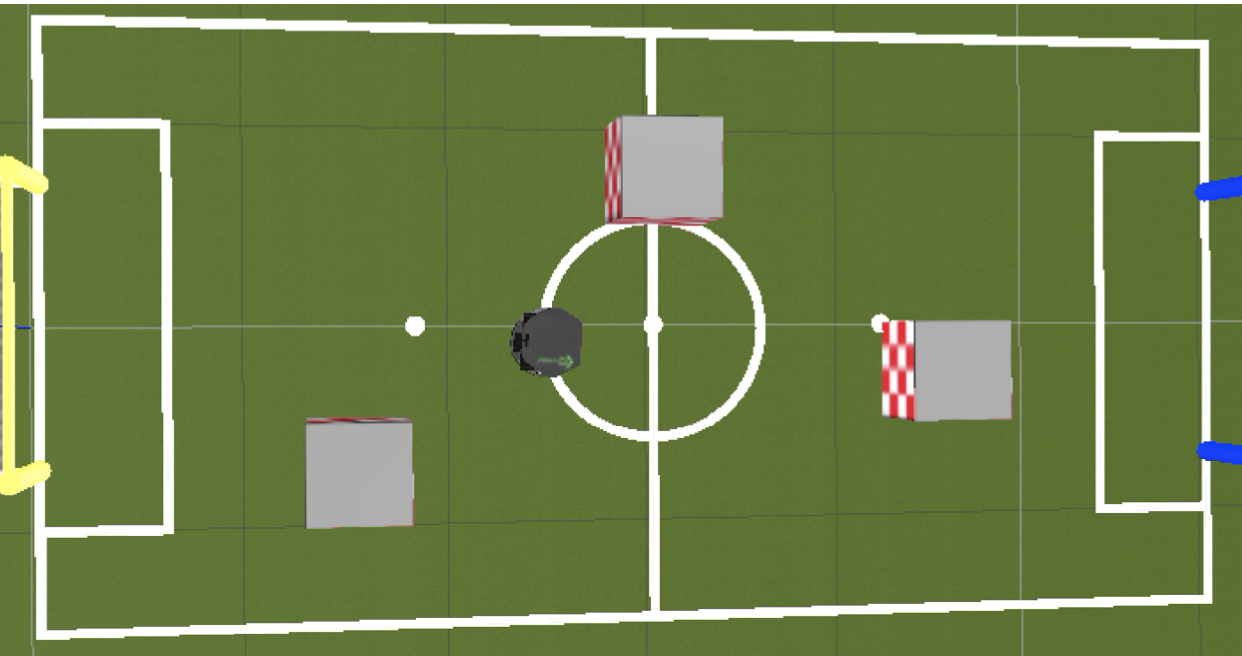

In this project I used computer vision techniques to map out the soccer field, including the goal posts, the lines, the obstacles, and the robot's location and direction. I then used an IBVS (image-based visual servoing) module to let my Turtlebot (named Emmett) navigate the soccer field autonomously, avoiding the obstacles and reaching the goal.

Feature Outline

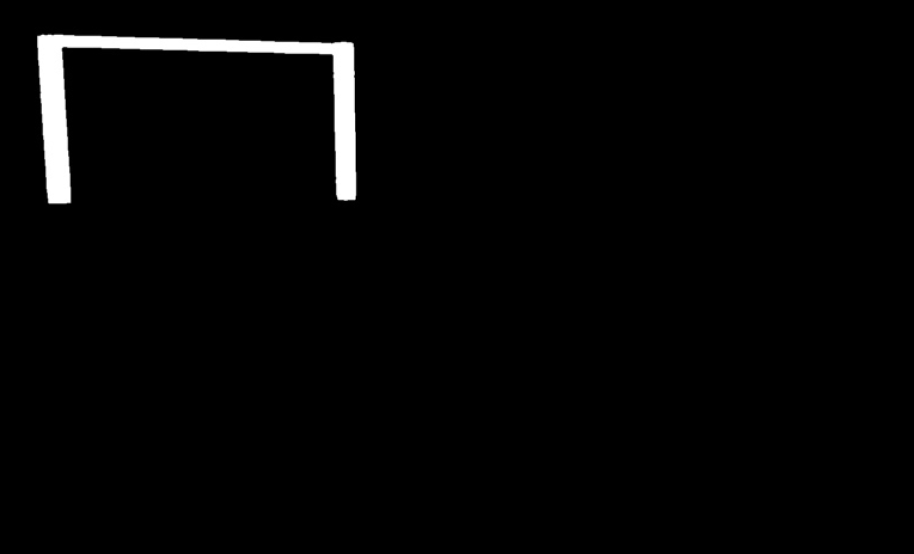

Feature 1 – Goal Detection

- Description: I used a simple mask to isolate the goal post. To find the center of the goal post, I applied a morphological opening to remove noise, found the contours, and then took the convex hull of the contours, which gave the goal area.
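The goal-detection steps can be sketched without OpenCV as below. This is a minimal, standard-library illustration, not the project's code: in OpenCV the same steps would typically be `cv2.morphologyEx` with `cv2.MORPH_OPEN`, `cv2.findContours`, and `cv2.convexHull`. The binary mask is assumed to come from an earlier threshold, the contour step is simplified to "all foreground pixels" (whose hull equals the hull of the contour points), and taking the mean of the hull vertices as the "center" is an assumption, since the write-up does not say how the center was derived.

```python
from typing import List, Tuple

Mask = List[List[int]]   # binary image: 1 = pixel passed the (assumed) goal threshold
Point = Tuple[int, int]  # (x, y) in image coordinates


def erode(mask: Mask) -> Mask:
    """3x3 erosion: a pixel stays on only if its whole neighbourhood is on."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            out[y][x] = int(all(mask[y + dy][x + dx]
                                for dy in (-1, 0, 1) for dx in (-1, 0, 1)))
    return out


def dilate(mask: Mask) -> Mask:
    """3x3 dilation: a pixel turns on if any pixel in its neighbourhood is on."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            out[y][x] = int(any(mask[yy][xx]
                                for yy in range(max(0, y - 1), min(h, y + 2))
                                for xx in range(max(0, x - 1), min(w, x + 2))))
    return out


def opening(mask: Mask) -> Mask:
    """Morphological opening (erosion then dilation) removes isolated specks."""
    return dilate(erode(mask))


def convex_hull(points: List[Point]) -> List[Point]:
    """Andrew's monotone chain; drops collinear points, returns hull vertices in order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o: Point, a: Point, b: Point) -> int:
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower: List[Point] = []
    upper: List[Point] = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def goal_center(mask: Mask) -> Tuple[float, float]:
    """Clean the mask, hull the surviving pixels, return the mean of the hull vertices."""
    clean = opening(mask)
    pts = [(x, y) for y, row in enumerate(clean) for x, v in enumerate(row) if v]
    hull = convex_hull(pts)
    return (sum(p[0] for p in hull) / len(hull),
            sum(p[1] for p in hull) / len(hull))
```

With a 4x4 goal blob and a one-pixel speck of noise, the opening removes the speck and the hull is the blob's four corners.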

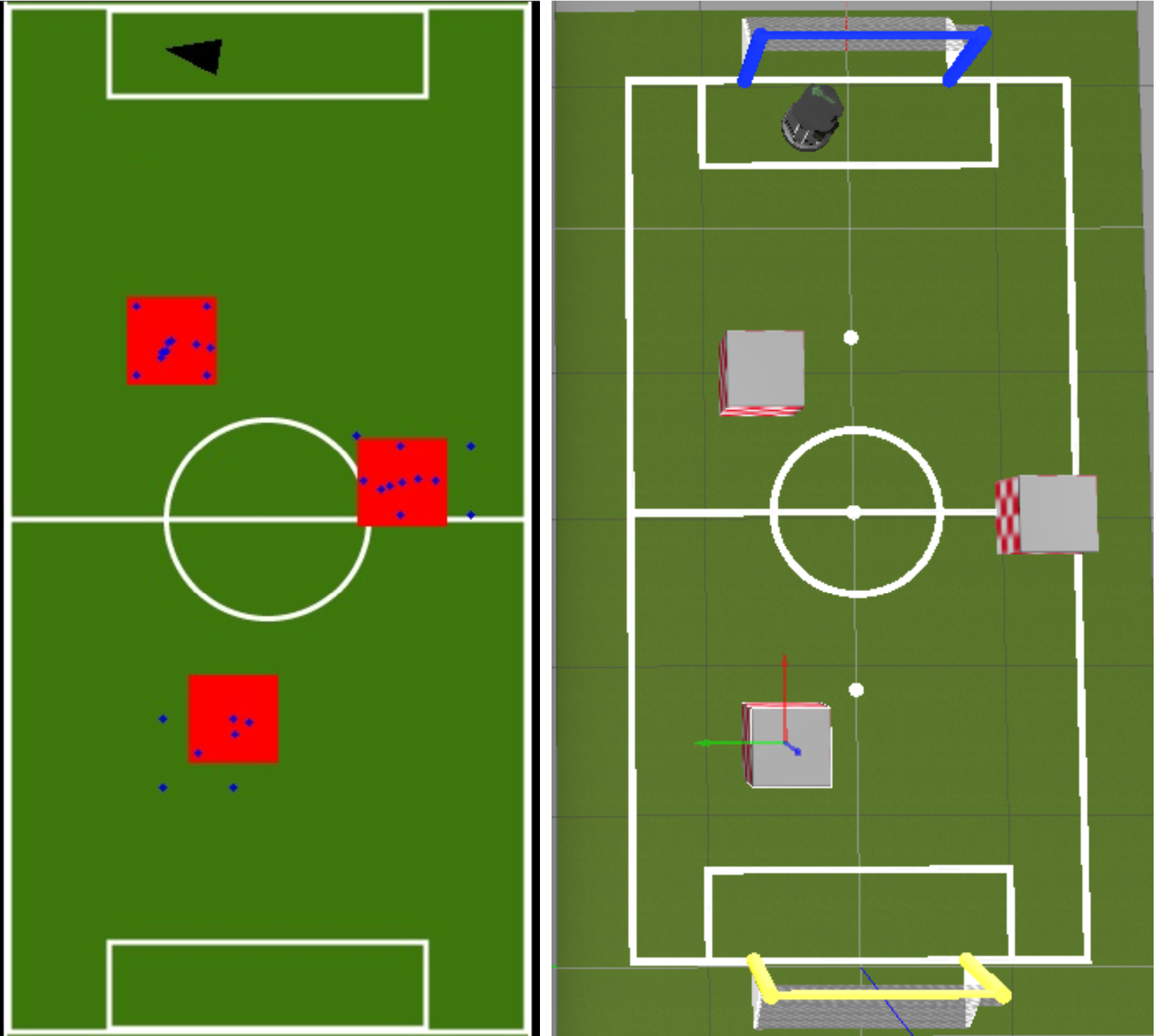

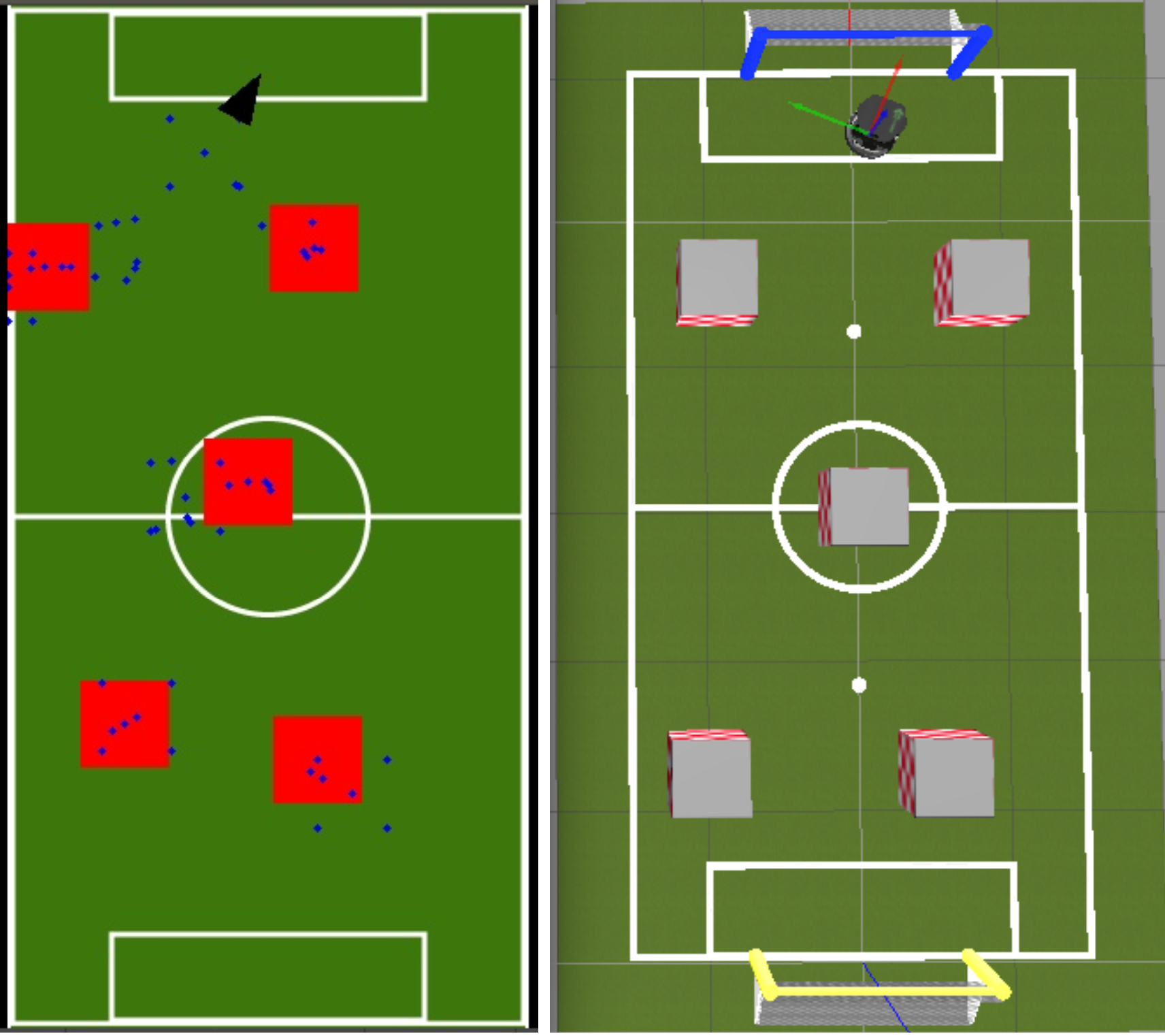

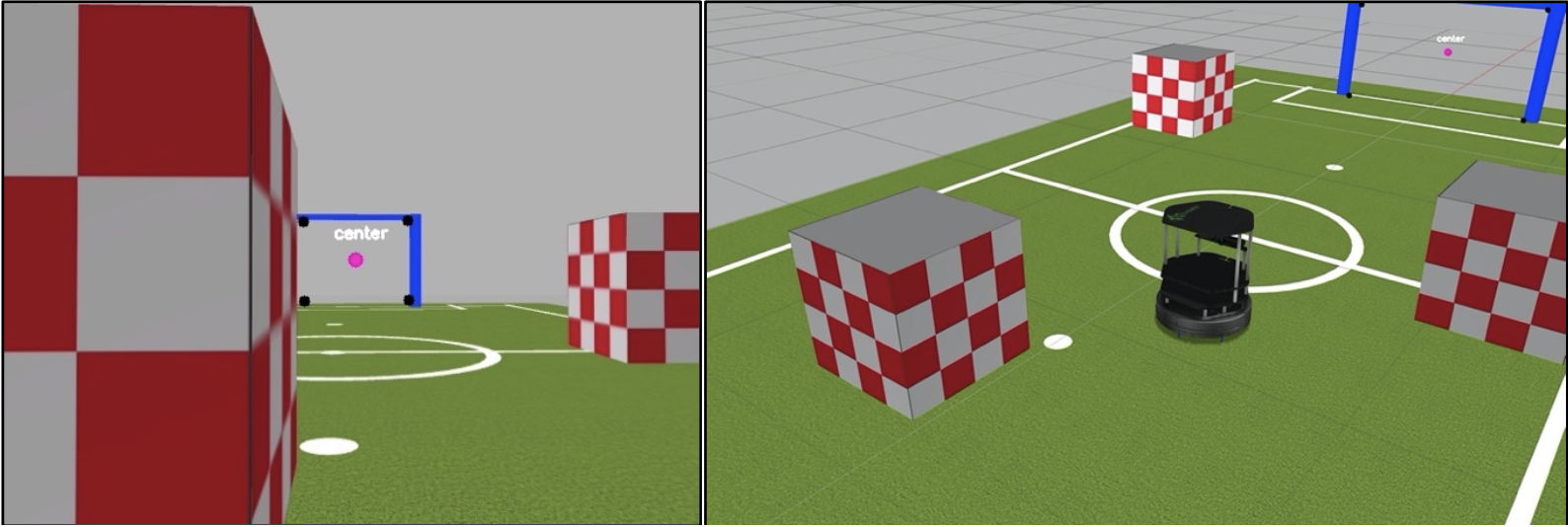

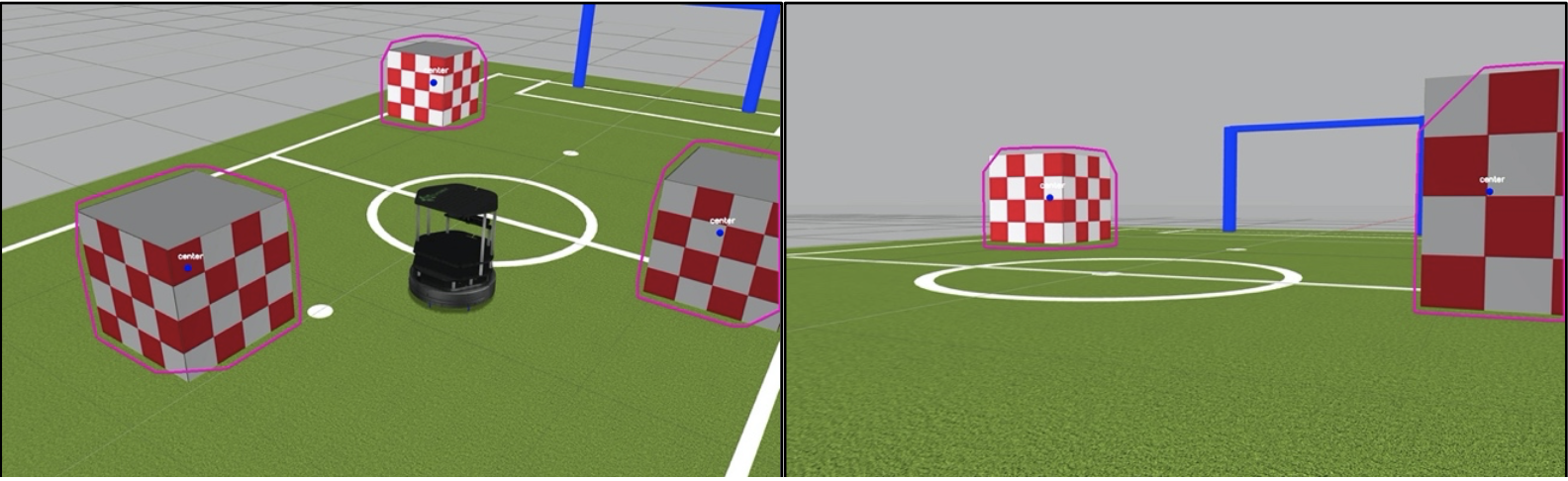

- Description: Similar to goal detection, I used a mask to get the general shape of the obstacle, found the contours of the resulting image, and took their convex hull. I then used minAreaRect to approximate the obstacle's boundaries and center point.
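To show what the minAreaRect step computes, here is a minimal standard-library sketch (not OpenCV's implementation): the minimum-area bounding rectangle of a convex hull always has one side collinear with a hull edge, so it is enough to try each edge direction. The function name `min_area_rect` is hypothetical, and its angle convention (the direction of the chosen hull edge, in degrees) differs from the angle that `cv2.minAreaRect` reports.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def convex_hull(points: List[Point]) -> List[Point]:
    """Andrew's monotone chain convex hull (collinear points dropped)."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o: Point, a: Point, b: Point) -> float:
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower: List[Point] = []
    upper: List[Point] = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def min_area_rect(points: List[Point]):
    """Return (center, (width, height), angle_deg) of the smallest enclosing rectangle."""
    hull = convex_hull(points)
    n = len(hull)
    best = None
    for i in range(n):
        (x1, y1), (x2, y2) = hull[i], hull[(i + 1) % n]
        theta = math.atan2(y2 - y1, x2 - x1)
        c, s = math.cos(theta), math.sin(theta)
        # project each hull vertex onto the edge direction (u) and its normal (v)
        us = [x * c + y * s for x, y in hull]
        vs = [-x * s + y * c for x, y in hull]
        w, h = max(us) - min(us), max(vs) - min(vs)
        if best is None or w * h < best[0]:
            uc, vc = (max(us) + min(us)) / 2, (max(vs) + min(vs)) / 2
            # rotate the box centre from (u, v) back into image coordinates
            center = (uc * c - vc * s, uc * s + vc * c)
            best = (w * h, center, (w, h), math.degrees(theta))
    _, center, size, angle = best
    return center, size, angle
```

For a diamond-shaped obstacle the sketch finds the tilted square of area 2 rather than the axis-aligned box of area 4.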

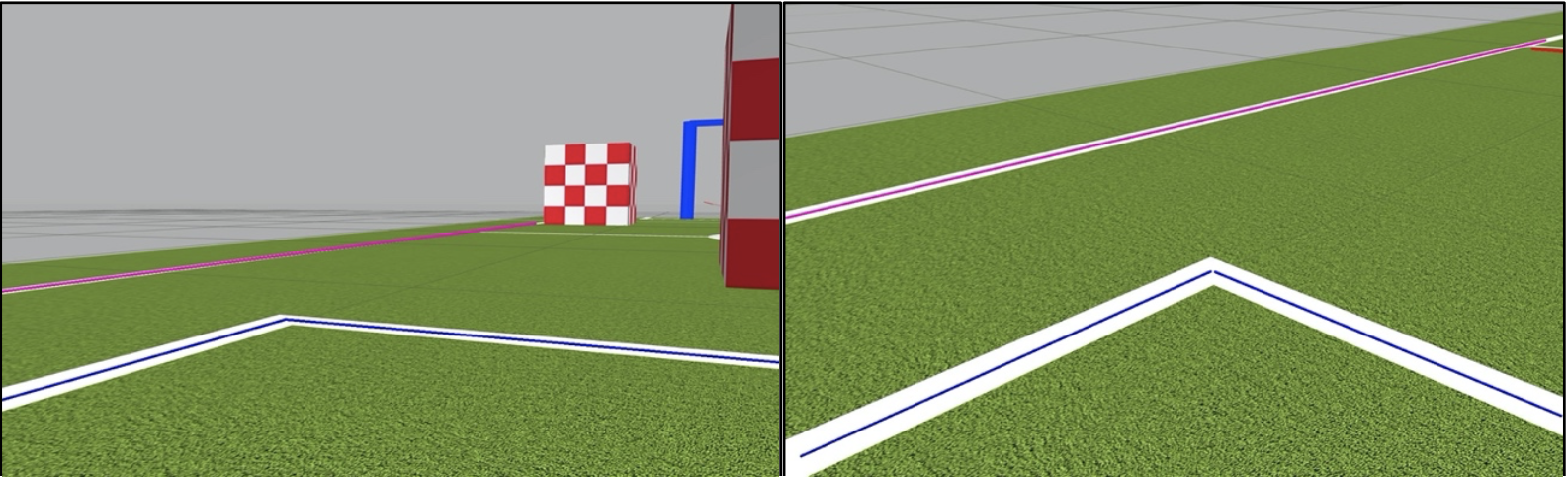

- Description: I removed all the obstacles and goal posts so that only the field and the lines remained. I then applied erosion and dilation to remove noise and ran a fast line detector on the masked image to detect the field lines. I also did some very basic estimation of which lines were inner lines and which were boundary lines, and colour-coded them accordingly.
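The masking and labelling around the line detector might look like the sketch below. The detector itself (for example OpenCV's contrib `FastLineDetector`) is not reimplemented; segments are assumed to arrive as `(x1, y1, x2, y2)` tuples. The write-up does not say how inner and boundary lines were told apart, so the length-based rule in `classify`, its `frac` threshold, and the `COLOURS` palette are all hypothetical placeholders.

```python
import math
from typing import Dict, List, Tuple

Mask = List[List[int]]
Segment = Tuple[float, float, float, float]  # x1, y1, x2, y2 as a line detector would return


def lines_only(frame: Mask, obstacles: Mask, goals: Mask) -> Mask:
    """Keep field/line pixels, zeroing anything flagged as an obstacle or a goal post."""
    return [[int(f and not o and not g) for f, o, g in zip(fr, orow, grow)]
            for fr, orow, grow in zip(frame, obstacles, goals)]


def classify(seg: Segment, img_w: int, frac: float = 0.5) -> str:
    """HYPOTHETICAL rule: segments longer than frac * image width count as boundary lines."""
    x1, y1, x2, y2 = seg
    length = math.hypot(x2 - x1, y2 - y1)
    return "boundary" if length >= frac * img_w else "inner"


# hypothetical BGR drawing colours for each label
COLOURS: Dict[str, Tuple[int, int, int]] = {"boundary": (0, 0, 255), "inner": (255, 0, 0)}
```

Keeping the removal as a pure mask operation means the noise clean-up and the detector only ever see field and line pixels.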

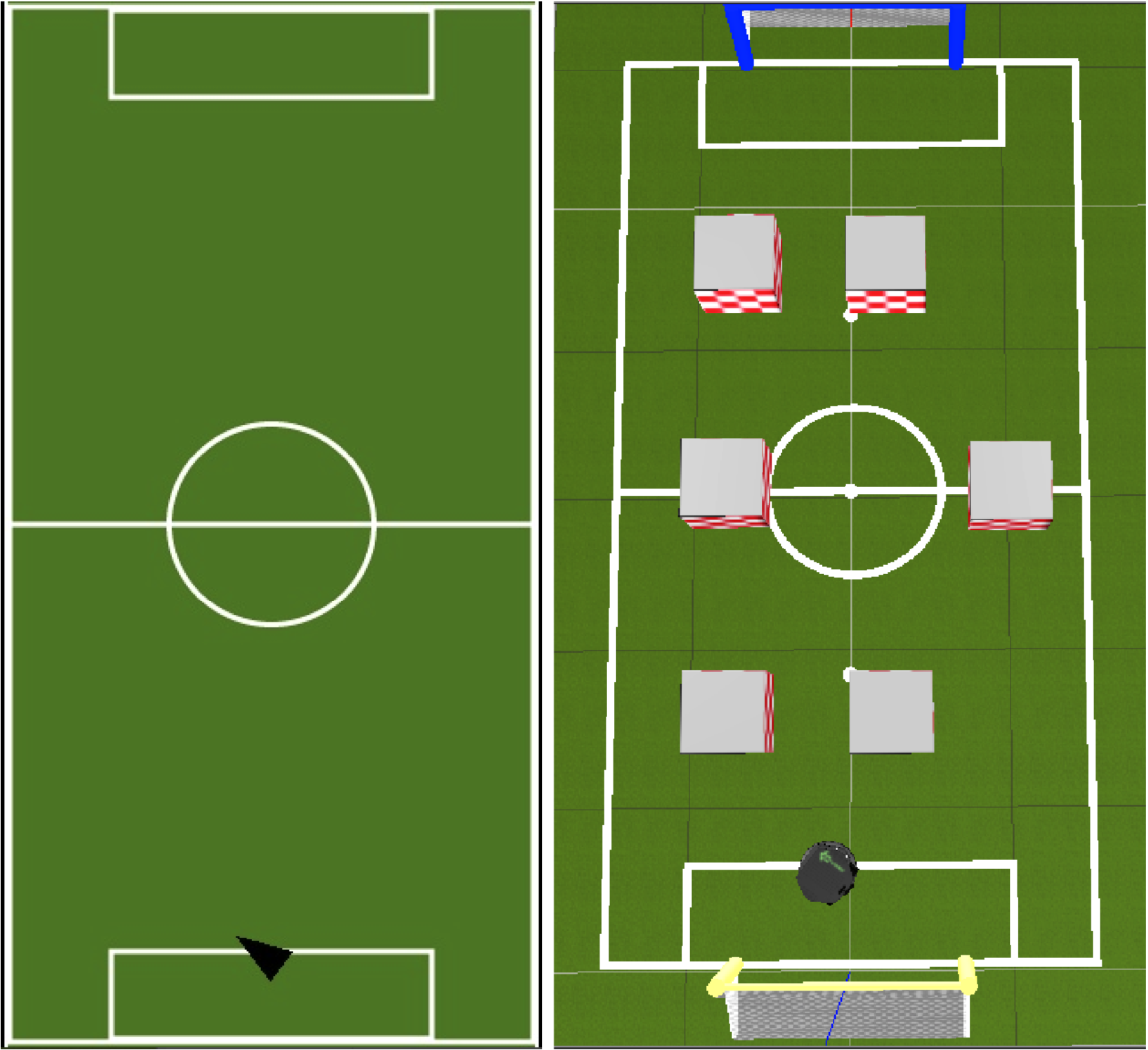

- Description: Using the positions of the obstacles and their distances from the robot, I adjust the robot's path to avoid them. If there are no obstacles in sight, I set the path towards the goal.
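One way to turn obstacle positions and distances into a steering command is a proportional law on the horizontal image error, in the spirit of image-based visual servoing. This is a sketch under assumptions, not the project's IBVS module: the image width `IMG_W`, the gains `K_GOAL` and `K_OBS`, and the cut-off `SAFE_DIST` are hypothetical, and obstacles are assumed to be reported as `(pixel_x, distance_m)` pairs.

```python
from typing import List, Optional, Tuple

IMG_W = 640       # hypothetical image width in pixels
K_GOAL = 1.0      # hypothetical gain on the goal's image error
K_OBS = 0.5       # hypothetical gain on obstacle repulsion
SAFE_DIST = 1.0   # hypothetical range (m) beyond which obstacles are ignored


def bearing(px: float) -> float:
    """Normalised horizontal image error in [-1, 1]; negative = left of centre."""
    return (px - IMG_W / 2) / (IMG_W / 2)


def steer(goal_px: Optional[float], obstacles: List[Tuple[float, float]]) -> float:
    """Angular velocity command, positive = turn left (ROS convention)."""
    omega = 0.0
    near = [(px, d) for px, d in obstacles if d < SAFE_DIST]
    for px, d in near:
        # turn left if the obstacle is right of centre, right if it is left;
        # closer obstacles push harder
        push = K_OBS * (SAFE_DIST - d) / SAFE_DIST
        omega += push if bearing(px) >= 0 else -push
    if not near and goal_px is not None:
        # nothing in range: drive the goal centre towards the image centre
        omega = -K_GOAL * bearing(goal_px)
    return omega
```

For example, `steer(480, [])` returns `-0.5` (the goal is right of centre, so turn right), while a close obstacle right of centre overrides the goal and produces a left turn.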

- Description: Since we already know the approximate center of the goal, when there are no obstacles in sight I simply steer towards it. Once the robot gets close to the goal it can no longer see it, so it moves forward a preset amount and then stops.
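The final approach can be modelled as a small state machine: drive while the goal is visible, remember once it is close, and when it drops out of view, drive a fixed distance blind and stop. The write-up does not say how "close" was detected or what the preset amount was, so `CLOSE_WIDTH` (apparent goal width as a fraction of the image), `FINAL_PUSH`, `FORWARD_SPEED`, and the `GoalApproach` class are hypothetical.

```python
from dataclasses import dataclass

FORWARD_SPEED = 0.15  # hypothetical linear speed (m/s)
FINAL_PUSH = 0.5      # hypothetical preset distance (m) driven after the goal leaves view
CLOSE_WIDTH = 0.6     # hypothetical: "close" once the goal spans 60% of the image width


@dataclass
class GoalApproach:
    close: bool = False   # has the goal been seen nearby?
    pushed: float = 0.0   # distance covered during the blind final push
    done: bool = False

    def step(self, goal_visible: bool, goal_width_frac: float, dt: float) -> float:
        """Return the linear velocity for this control tick."""
        if self.done:
            return 0.0
        if goal_visible:
            if goal_width_frac >= CLOSE_WIDTH:
                self.close = True
            return FORWARD_SPEED
        if self.close:
            # the goal vanished because we are on top of it: drive the preset distance, then stop
            self.pushed += FORWARD_SPEED * dt
            if self.pushed >= FINAL_PUSH:
                self.done = True
                return 0.0
            return FORWARD_SPEED
        return 0.0  # goal lost while still far away; another behaviour should take over
```

Tracking distance as `speed * dt` keeps the blind push independent of the control loop rate.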

- Description: If the robot can't see the goals or any obstacle, I fall back on navigating with the boundary lines. Based on the gradient of the boundary lines, I turn to try to keep the robot facing inwards towards the field. I assume that if the robot can't see any goal, boundary line, or obstacle, it must be facing outward or be off the field, so it makes a hard random turn to try to find an obstacle or goal that can then guide it properly.
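The fallback behaviour might be sketched as follows. The write-up does not give the exact rule that maps a boundary line's gradient to a turn, so the one used here (turn away from whichever end of the line is lower in the image, i.e. nearer the robot) is a hypothetical stand-in, as are the constants `K_ALIGN`, `HARD_TURN`, and `MIN_TILT`. The random hard turn mirrors the description; a seeded `random.Random` is passed in so runs are repeatable.

```python
import math
import random
from typing import Optional, Tuple

Segment = Tuple[float, float, float, float]  # x1, y1, x2, y2 in pixels; image y grows downward

K_ALIGN = 1.0    # hypothetical gain on the line's tilt
HARD_TURN = 1.5  # hypothetical angular speed (rad/s) for the blind search turn
MIN_TILT = 0.05  # hypothetical: below this tilt (rad) the line is treated as head-on


def fallback_turn(boundary: Optional[Segment], rng: random.Random) -> float:
    """Angular velocity when no goal or obstacle is visible (positive = turn left)."""
    if boundary is None:
        # nothing recognisable in view: assume we face outward and turn hard at random
        return rng.choice((-1.0, 1.0)) * HARD_TURN
    x1, y1, x2, y2 = boundary
    if x1 > x2:  # order the endpoints left to right
        x1, y1, x2, y2 = x2, y2, x1, y1
    # tilt > 0 when the left end is lower in the image, i.e. closer to the robot
    tilt = math.atan2(y1 - y2, x2 - x1)
    if abs(tilt) < MIN_TILT:
        # line is roughly perpendicular to our heading: no preferred side, so pick one
        return rng.choice((-1.0, 1.0)) * HARD_TURN
    # turn away from the nearer end of the boundary, harder for a steeper tilt
    return -K_ALIGN * tilt
```

A line whose left end is closer gives a right turn, and the mirror-image line gives a left turn of the same size.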

- Description: I estimate the robot's location and direction on the field map from its velocities (dead reckoning). I also apply a few heuristic corrections based on what the robot sees, such as the center lines or the goal posts, to make the estimate more accurate.
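Velocity-based localization is usually a forward-Euler integration of the unicycle model, sketched below. The write-up does not describe its correction "tricks", so `correct`, which blends in an independent position fix from a landmark with a hypothetical weight `alpha`, is an illustrative assumption rather than the project's method.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Pose:
    x: float = 0.0      # metres, field frame
    y: float = 0.0
    theta: float = 0.0  # heading in radians, counter-clockwise from +x


def integrate(p: Pose, v: float, omega: float, dt: float) -> Pose:
    """Dead reckoning: advance the pose with linear speed v and angular speed omega."""
    theta = p.theta + omega * dt
    theta = math.atan2(math.sin(theta), math.cos(theta))  # wrap heading to (-pi, pi]
    return Pose(p.x + v * math.cos(p.theta) * dt,
                p.y + v * math.sin(p.theta) * dt,
                theta)


def correct(p: Pose, landmark_xy: Tuple[float, float], alpha: float = 0.3) -> Pose:
    """HYPOTHETICAL correction: blend a landmark-derived position fix into the estimate."""
    lx, ly = landmark_xy
    return Pose((1 - alpha) * p.x + alpha * lx, (1 - alpha) * p.y + alpha * ly, p.theta)
```

Because dead-reckoning error grows without bound, even a crude periodic blend like this keeps the estimate anchored when a landmark is in view.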

- Description: I used particle-filter SLAM to update the obstacle map. At each time step, the obstacle's rectangle was drawn around the particle position with the highest probability. The position updated as the robot moved and the predictions became more accurate.
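The predict, weight, and resample cycle behind this can be sketched for a single static obstacle. Note that this is a plain particle filter over one obstacle's position, not full SLAM (the robot pose is not part of the particle state). It assumes each observation has already been converted to a field-frame position; `pf_step` and the noise parameters are hypothetical. The returned `best` particle corresponds to where the rectangle would be drawn.

```python
import math
import random
from typing import List, Tuple

Particle = Tuple[float, float]  # hypothesised obstacle position (x, y) in the field frame


def pf_step(particles: List[Particle], measurement: Particle, rng: random.Random,
            motion_noise: float = 0.05,
            meas_noise: float = 0.2) -> Tuple[List[Particle], Particle]:
    """One predict/weight/resample cycle; returns the new particles and the best one."""
    # predict: the obstacle is static, so only add a little process noise
    moved = [(x + rng.gauss(0, motion_noise), y + rng.gauss(0, motion_noise))
             for x, y in particles]
    # weight: Gaussian likelihood of the observed obstacle position
    mx, my = measurement
    weights = [math.exp(-((x - mx) ** 2 + (y - my) ** 2) / (2 * meas_noise ** 2))
               for x, y in moved]
    total = sum(weights)
    if total == 0:  # every weight underflowed: fall back to uniform weights
        weights, total = [1.0] * len(moved), float(len(moved))
    weights = [w / total for w in weights]
    # the highest-weight particle is the current best estimate of the obstacle
    best = moved[max(range(len(moved)), key=weights.__getitem__)]
    # resample with replacement in proportion to weight
    resampled = rng.choices(moved, weights=weights, k=len(moved))
    return resampled, best
```

Starting from particles spread uniformly over the field, repeated observations near the same spot pull the cloud, and the best particle, onto the obstacle.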